Category: Blog

GOV. EDWARDS, PRESIDENT TATE OPEN DOORS TO NEW LSU CYBERSECURITY OPERATIONS CENTER, PROTECTION MODEL FOR LOUISIANA

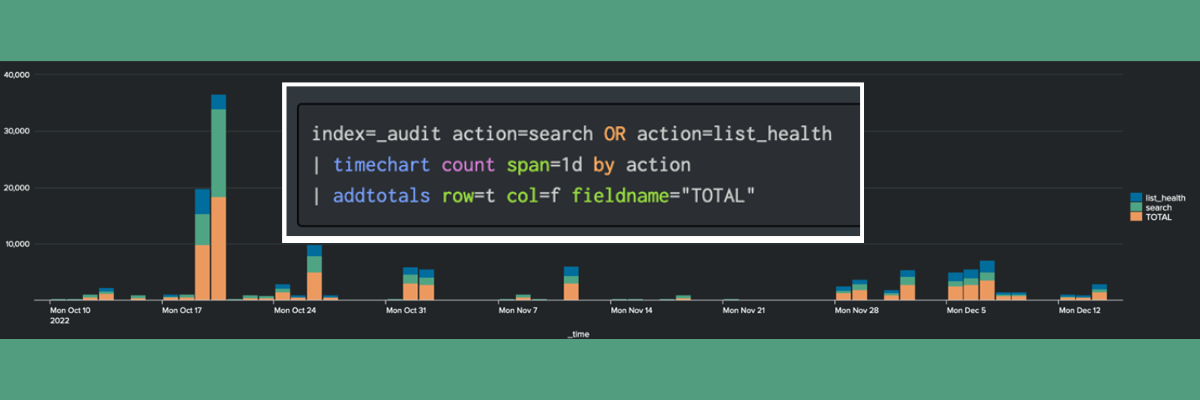

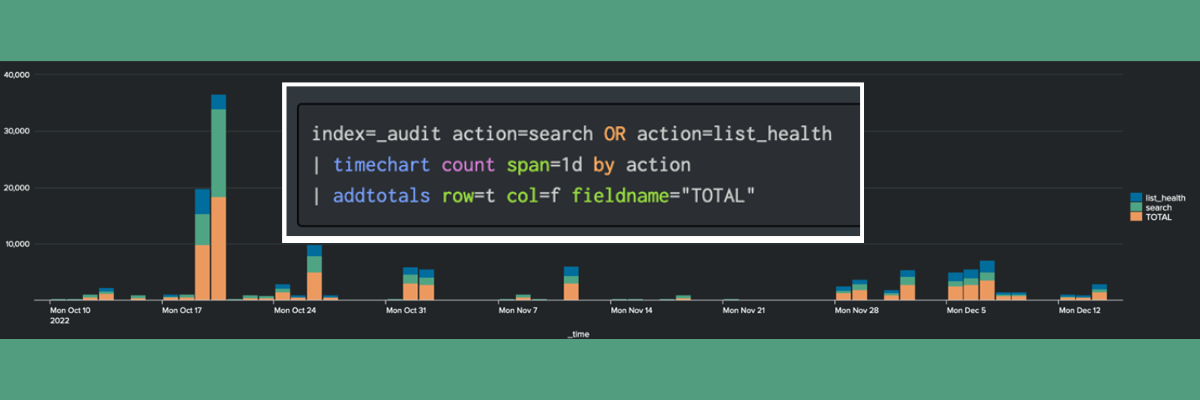

Time Range for Searching Splunk Events

Guidance for Headless Content Management Design: Key Design Strategies for Headless Implementations of Oracle Content Management Cloud

- Blog

- Security Bulletin

- Splunk

TekStream Security Bulletin: Akira on Cisco Adaptive Security Appliance (ASA) VPN

How to Avoid Skipped Searches in Splunk Cloud

A Beginner’s Guide to Splunk App/User Context Configuration Files

Creating Chart Overlays and Annotations (Flags) in a TimeChart

Security Bulletin: Microsoft Zero-Day Office & Windows Vulnerability

Using clientName to Simplify Forwarder Management