JSON Structured Data & the SEDCMD in Splunk

By: Khristian Pena | Splunk Consultant

Have you ever worked with structured data that does not follow its own structure? Maybe your JSON data has a syslog header. Maybe your field values have an extra quote, colon, or semicolon and your application team cannot remediate the issue. Today, we’re going to discuss a powerful tool for reformatting your data so that key-value fields are extracted automatically at search time. These field extractions rely on KV_MODE in props.conf, which automatically extracts fields from structured data formats such as JSON and XML, as well as from table-formatted events.

Props.conf

[<spec>]

KV_MODE = [none|auto|auto_escaped|multi|json|xml]

This article will focus on the JSON structure and walk through some ways to validate, remediate, and ingest this data using the SEDCMD. You may have used the SEDCMD to anonymize or mask sensitive data (PHI, PCI, etc.), but today we will use it to replace and append to existing strings.

JSON is built on two data structures that are supported by virtually every programming language.

- A collection of name/value pairs. Different programming languages call this an object, record, struct, dictionary, hash table, keyed list, or associative array.

- An ordered list of values. Different programming languages call this an array, vector, list, or sequence.

Syntax:

An object starts with an open curly bracket { and ends with a closed curly bracket }. Between them, any number of key-value pairs can reside. Each key is separated from its value by a colon : and, if there is more than one key-value pair, the pairs are separated by a comma ,

{

"Students": [

{ "Name":"Amit Goenka" ,

"Major":"Physics" },

{ "Name":"Smita Pallod" ,

"Major":"Chemistry" },

{ "Name":"Rajeev Sen" ,

"Major":"Mathematics" }

]

}

An array starts with an open bracket [ and ends with a closed bracket ]. Between them, any number of values can reside. If there is more than one value, the values are separated by a comma ,

[

{

"name": "Bidhan Chatterjee",

"email": "bidhan@example.com"

},

{

"name": "Rameshwar Ghosh",

"email": "datasoftonline@example.com"

}

]
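To make the two structures concrete, here is a minimal Python sketch (Python is used only for illustration; it is not part of Splunk). It parses the two samples above, written with plain ASCII double quotes, and shows that a top-level object becomes a dictionary while a top-level array becomes a list. The variable names are assumptions chosen for this example.

```python
import json

# The object sample: one key ("Students") whose value is an array of objects.
students_doc = '''
{
  "Students": [
    {"Name": "Amit Goenka", "Major": "Physics"},
    {"Name": "Smita Pallod", "Major": "Chemistry"},
    {"Name": "Rajeev Sen", "Major": "Mathematics"}
  ]
}
'''

# The array sample: an ordered list of two objects.
contacts_doc = '''
[
  {"name": "Bidhan Chatterjee", "email": "bidhan@example.com"},
  {"name": "Rameshwar Ghosh", "email": "datasoftonline@example.com"}
]
'''

students = json.loads(students_doc)  # top-level object -> dict
contacts = json.loads(contacts_doc)  # top-level array  -> list

print(type(students).__name__, type(contacts).__name__)
print(students["Students"][1]["Major"])
print([c["email"] for c in contacts])
```

Note that the parser only accepts straight double quotes; typographic quotes pasted from a word processor or web page will fail validation.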

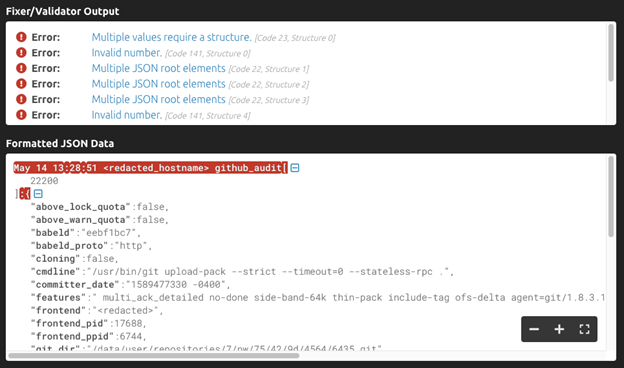

JSON Format Validation:

Now that we’re a bit more familiar with the structure Splunk expects to extract from, let’s work with a sample. The sample data is JSON wrapped in a syslog header. While this data can be ingested as is, you will have to manually extract each field if you choose to not reformat it. You can validate the structure by copying this event to https://jsonformatter.curiousconcept.com/ .

Sample Data:

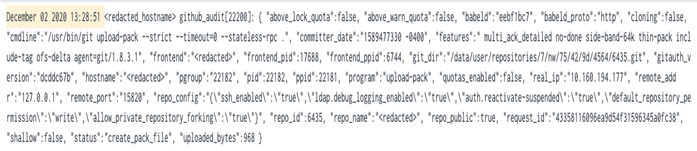

May 14 13:28:51 <redacted_hostname> github_audit[22200]: { "above_lock_quota":false, "above_warn_quota":false, "babeld":"eebf1bc7", "babeld_proto":"http", "cloning":false, "cmdline":"/usr/bin/git upload-pack --strict --timeout=0 --stateless-rpc .", "committer_date":"1589477330 -0400", "features":" multi_ack_detailed no-done side-band-64k thin-pack include-tag ofs-delta agent=git/1.8.3.1", "frontend":"<redacted>", "frontend_pid":17688, "frontend_ppid":6744, "git_dir":"/data/user/repositories/7/nw/75/42/9d/4564/6435.git", "gitauth_version":"dcddc67b", "hostname":"<redacted>", "pgroup":"22182", "pid":22182, "ppid":22181, "program":"upload-pack", "quotas_enabled":false, "real_ip":"10.160.194.177", "remote_addr":"127.0.0.1", "remote_port":"15820", "repo_config":"{\"ssh_enabled\":\"true\",\"ldap.debug_logging_enabled\":\"true\",\"auth.reactivate-suspended\":\"true\",\"default_repository_permission\":\"write\",\"allow_private_repository_forking\":\"true\"}", "repo_id":6435, "repo_name":"<redacted>", "repo_public":true, "request_id":"43358116096ea9d54f31596345a0fc38", "shallow":false, "status":"create_pack_file", "uploaded_bytes":968 }

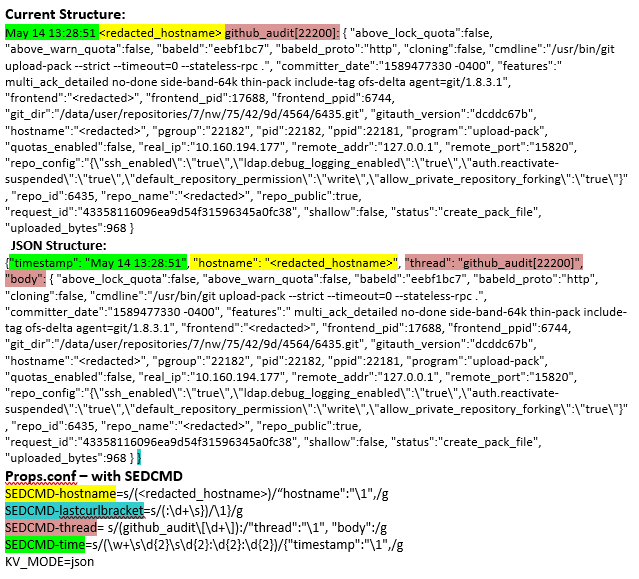

The errors are noted and highlighted below:

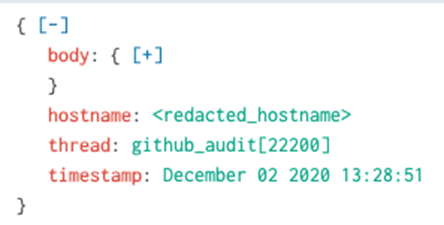

As we can see, the timestamp, hostname, and thread field (github_audit[22200]:) are outside of the JSON object, so the event as a whole is not valid JSON.
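The same check can be run offline. The sketch below is an illustration in Python, not something Splunk runs: it confirms that the full event fails JSON validation while the portion starting at the first { parses cleanly. The event is a shortened version of the sample, `host01` is a hypothetical stand-in for the redacted hostname, and `split_header` is a helper name invented for this example.

```python
import json

def split_header(event: str):
    """Return (header, parsed_json) for an event shaped like '<header>{...}'."""
    start = event.find("{")
    if start == -1:
        raise ValueError("no JSON object found in event")
    return event[:start], json.loads(event[start:])

# Shortened sample event; host01 is a placeholder hostname (assumption).
event = (
    'May 14 13:28:51 host01 github_audit[22200]: '
    '{ "cloning":false, "pid":22182, "program":"upload-pack", "uploaded_bytes":968 }'
)

# The whole event is not valid JSON because of the syslog header.
try:
    json.loads(event)
    whole_event_is_json = True
except json.JSONDecodeError:
    whole_event_is_json = False

# Everything from the first "{" onward is valid JSON.
header, payload = split_header(event)

print(whole_event_is_json)
print(repr(header))
print(payload["program"], payload["uploaded_bytes"])
```

This is the gap the SEDCMD needs to close: once the header is removed before indexing, the remaining text is a well-formed JSON object.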

Replace strings in events with SEDCMD

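You can use the SEDCMD setting to replace strings or substitute characters. It is applied during parsing, before the data is indexed, so it must be configured in props.conf on the instance that parses the data (an indexer or heavy forwarder). The syntax for a sed replace is: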

SEDCMD-<class> = s/<regex>/\1<replacement>/flags

- <class> is a unique name for this SEDCMD within the stanza. The name is important because multiple SEDCMD settings are applied in alphabetical order.

- regex is a Perl language regular expression.

- replacement is a string to replace the regular expression match.

- flags can be either the letter g to replace all matches or a number to replace a specified match.

- \1 is a backreference rather than a flag. Use it to insert the text matched by the first capture group of the regex back into the replacement.
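A substitution can be prototyped outside Splunk before it goes into props.conf. The sketch below is a rough Python stand-in for the s/<regex>/<replacement>/flags form, under a few assumptions: Python's `re` module is close to, but not identical to, the regex engine Splunk uses; the numeric flag is not implemented; `sed_substitute` is a helper invented for this example; and the SEDCMD lines in the comments use hypothetical class names and have not been taken from the original article.

```python
import re

def sed_substitute(text, pattern, replacement, flags=""):
    """Rough stand-in for s/<pattern>/<replacement>/<flags>.

    Only the 'g' flag is modeled: with 'g' every match is replaced,
    otherwise only the first match is replaced.
    """
    count = 0 if "g" in flags else 1  # re.sub treats count=0 as "replace all"
    return re.sub(pattern, replacement, text, count=count)

# host01 is a placeholder for the redacted hostname (assumption).
raw = 'May 14 13:28:51 host01 github_audit[22200]: { "pid":22182, "program":"upload-pack" }'

# Drop everything before the first "{".
# Possible props.conf equivalent (hypothetical class name):
#   SEDCMD-strip_header = s/^[^{]+//
stripped = sed_substitute(raw, r'^[^{]+', '')

# Backreferences: keep the key (\1) and the digits (\2), drop the quotes around the number.
# Possible props.conf equivalent (hypothetical class name):
#   SEDCMD-unquote_pgroup = s/("pgroup":)"(\d+)"/\1\2/
unquoted = sed_substitute('"pgroup":"22182"', r'("pgroup":)"(\d+)"', r'\1\2')

# The g flag: replace every match instead of only the first one.
first_only = sed_substitute('a;b;c', ';', ',')
all_matches = sed_substitute('a;b;c', ';', ',', flags='g')

print(stripped)
print(unquoted)
print(first_only, all_matches)
```

Whatever expression works here should still be tested in Splunk itself, since escaping rules and regex behavior can differ between the two environments.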

How To Test in Splunk:

Copy the sample data into a text file and upload it using Splunk’s built-in Add Data feature under Settings. Try out each SEDCMD and note how the structure of the data changes.

BEFORE:

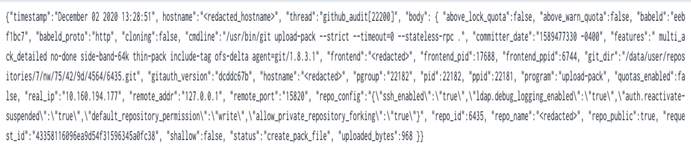

AFTER:

Props.conf – Search Time Field Extraction

[<spec>]

KV_MODE = json
